Note: this is the translation of a post that was originally published in French: Introduction à la complexité algorithmique – 2/2.

In the previous episode, I explained two ways to sort books, counted the elementary operations that each one needed, and estimated how that number of operations depends on the number of books that I wanted to sort. In particular, I looked into the best case, worst case and average case for the algorithms, depending on the initial ordering of the input. In this post, I’ll say a bit more about best/worst/average case, and then I’ll refine the notion of algorithmic complexity itself.

The “best case” is typically analyzed the least, because it rarely happens (thank you, Murphy’s Law). It still gives a bound on what is achievable, and it gives a hint on whether it’s useful to modify the input to get the best case more often.

The “worst case” is the only one that gives guarantees. Saying that my algorithm, in the worst case, executes in a given amount of time guarantees that it will never take longer, although it can be faster. That type of guarantee is sometimes necessary. In particular, it answers the question of what happens if an “adversary” provides the input in a way that makes the algorithm’s life as difficult as possible, a question that would interest cryptographers and security people, for example. Having guarantees on the worst case means that the algorithm works as desired even then. The drawback of worst case analysis is that the “usual” execution time often gets overestimated, sometimes by a lot.

Looking at the “average case” gives an idea of what happens “normally”. It also gives an idea of what happens if the algorithm is repeated several times on independent data, where both the worst case and the best case can happen. Moreover, there are sometimes ways to avoid the worst cases, which makes the average case the more useful one. For example, if an adversary gives me the books in an order that makes my algorithm slow, I can compensate for that by shuffling the books at the beginning, so that the probability of being in a bad case is low (and does not depend on my adversary’s input). The drawback of average case analysis is that we lose the guarantee that worst case analysis gives us.

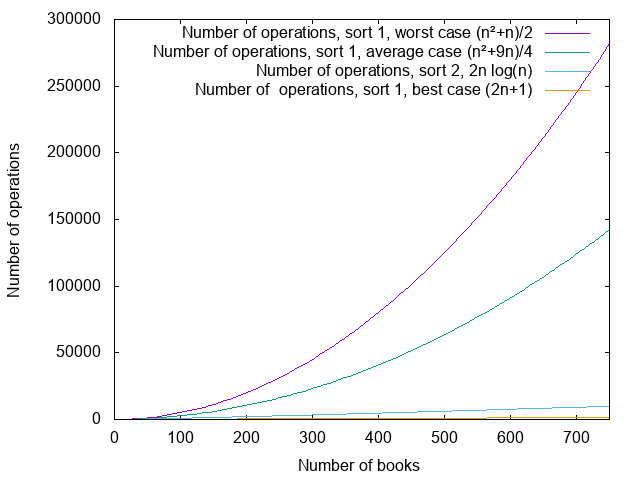

For my book sorting algorithms, my conclusions were as follows:

- For the first sorting algorithm, where at each step I searched for the place to put a book by scanning all the books that I had inserted so far, the number of operations for $n$ books grew linearly with $n$ in the best case and quadratically in the worst case, and in the average case I had $\frac{n^2 + 9n}{4}$ operations.

- For the second sorting algorithm, where I was grouping book groups two by two, I had, in all cases, $2n \log_2 n$ operations.

I’m going to draw a figure, because figures are pretty. If the colors are not that clear, the plotted functions and their captions are in the same order.

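It turns out that, when talking about complexity, these exact formulas (such as $\frac{n^2 + 9n}{4}$ or $2n \log_2 n$) would not be the ones that I would use in general. If someone asked me about these complexities, I would answer, respectively for the best, worst and average case of the first algorithm and for the second algorithm, that the complexities are $n$, $n^2$ (or “quadratic”), $n^2$ again, and $n \log n$.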

This may seem very imprecise, and I’ll admit that the first time I saw this kind of approximation, I was quite irritated. (It was in physics class, so I may not have been in the best of moods either.) Since then, I have come to find it quite handy, and even to think that it makes sense. The fact that “it makes sense” has a strong mathematical justification. For the people who want some precision, and who are not afraid of things like “limit when x tends to infinity of blah blah”, it’s explained here: http://en.wikipedia.org/wiki/Big_O_notation. It’s vastly above the level of the post I’m currently writing, but I still want to justify a couple of things; be warned that everything that follows is going to be highly non-rigorous.

The first question is what happens to the smaller elements of the formulas. The idea is to keep only what “matters” when looking at how the number of operations increases with the number of elements to sort. For example, if I want to sort 750 books, with the “average” case of the first algorithm, I have $\frac{n^2 + 9n}{4} = \frac{n^2}{4} + \frac{9n}{4}$ operations. For 750 books, the two parts of the sum yield, respectively, 140625 and… 1687. If I want to sort 1000 books, I get 250000 and 2250. The first part of the sum is much larger, and it grows much quicker. If I need to know how much time I need, and I don’t need that much precision, I can pick $\frac{n^2}{4}$ and discard $\frac{9n}{4}$: already for 1000 books, it contributes less than 1% of the total number of operations.
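To see how quickly the smaller term stops mattering, here is a minimal Python sketch that evaluates the two parts of $\frac{n^2 + 9n}{4}$ separately. The function name `average_case_terms` and the extra input size 10000 are only chosen for this illustration.

```python
def average_case_terms(n):
    """Return the two parts of (n^2 + 9n) / 4 separately: (n^2 / 4, 9n / 4)."""
    quadratic_part = n * n / 4
    linear_part = 9 * n / 4
    return quadratic_part, linear_part


for n in (750, 1000, 10_000):
    quadratic_part, linear_part = average_case_terms(n)
    # Share of the total number of operations contributed by the smaller term.
    share = linear_part / (quadratic_part + linear_part)
    print(f"n = {n}: n^2/4 = {quadratic_part:.0f}, 9n/4 = {linear_part:.1f}, "
          f"share of 9n/4 = {share:.2%}")
```

The share of the $\frac{9n}{4}$ term keeps shrinking as $n$ grows, which is why it gets dropped.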

The second question is more complicated: why do I consider as identical $n^2$ and $\frac{n^2}{4}$, or $2n \log_2 n$ and $n \log n$? The short answer is that it makes running times comparable between several algorithms. To determine which algorithm is the most efficient, it’s nice to be able to compare how they perform. In particular, we look at the “asymptotic” comparison, that is to say what happens when the input of the algorithm contains a very large number of elements (for instance, if I have a lot of books to sort): that’s where using the fastest algorithm is going to be most worth it.

To reduce the time that it takes an algorithm to execute, I have two possibilities. Either I reduce the time that each operation takes, or I reduce the number of operations. Suppose that I have a computer that can execute one operation per second, and that I want to sort 100 elements. The first algorithm, which needs $\frac{n^2}{4}$ operations, finishes after 2500 seconds. The second algorithm, which needs $2n \log_2 n$ operations, finishes after 1328 seconds. Now suppose that I have a much faster computer to execute the first algorithm. Instead of needing 1 second per operation, it’s 5 times faster, and can execute an operation in 0.2 seconds. That means that I can sort 100 elements in 500 seconds, which is faster than the second algorithm on the slower computer. Yay! Except that, first, if I run the second algorithm on the faster computer, I can sort my elements five times faster too, in 265 seconds. Moreover, suppose now that I have 1000 elements to sort. With the first algorithm on the fast computer, I need $0.2 \times \frac{1000^2}{4} = 50000$ seconds, and with the second algorithm on the much slower computer, $2 \times 1000 \times \log_2 1000 \approx 19931$ seconds.
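The arithmetic above can be replayed with a short Python sketch. It assumes the same operation counts as in this post ($\frac{n^2}{4}$ and $2n \log_2 n$) and the same two hypothetical computers (0.2 and 1 second per operation); the function names are mine. It also looks for the input size from which the slow computer running the second algorithm beats the fast computer running the first one.

```python
import math

FAST_SECONDS_PER_OP = 0.2  # hypothetical fast computer from the example
SLOW_SECONDS_PER_OP = 1.0  # hypothetical slow computer from the example


def quadratic_ops(n):
    """Operation count used for the first algorithm: n^2 / 4."""
    return n * n / 4


def nlogn_ops(n):
    """Operation count used for the second algorithm: 2 n log2(n)."""
    return 2 * n * math.log2(n)


def crossover():
    """Smallest n for which the first algorithm on the fast computer is
    strictly slower than the second algorithm on the slow computer."""
    n = 2
    while FAST_SECONDS_PER_OP * quadratic_ops(n) <= SLOW_SECONDS_PER_OP * nlogn_ops(n):
        n += 1
    return n


for n in (100, 1000):
    print(n,
          FAST_SECONDS_PER_OP * quadratic_ops(n),
          SLOW_SECONDS_PER_OP * nlogn_ops(n))
print("crossover at n =", crossover())
```

With these particular numbers the crossover happens at a few hundred elements. A bigger speed difference between the computers only pushes it further out, it does not remove it.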

That’s the idea behind removing the “multiplying numbers” when estimating complexity. Given an algorithm with a complexity “$n^2$” and an algorithm with a complexity “$n \log n$”, I can put the first algorithm on the fastest computer I can find: there will always be a number of elements above which the second algorithm, even on a very slow computer, will be faster than the first one. The number of elements in question can be very large if the difference of speed of the computers is large, but since large numbers are what I am interested in anyway, that’s not a problem.

So when I compare two algorithms, it’s much more interesting to see that one needs “something like $n \log n$” operations and one needs “something like $n^2$” operations than to try to pinpoint the exact constant that multiplies the $n \log n$ or the $n^2$.

Of course, if two algorithms need “something along the lines of $n^2$ operations”, asking for the constant that is multiplying that $n^2$ is a valid question. In practice, it’s not done that often, because unless things are very simple and well-defined (and even then), it’s very hard to determine that constant exactly: it depends on how you implement the algorithm in a programming language. It would also require defining exactly what an operation is. There are “classical” models that define all these things, but linking them to current programming languages and computers is probably not realistic.

Everything that I talked about so far is a function of $n$, which is in general the “size of the input”, or the “amount of work that the algorithm has to do”. For books to sort, it would be the number of books. For graph operations, it would be the number of vertices of the graph, and/or the number of edges. Now, as “people who write algorithms”, given an input of size $n$, what do we like, what makes us frown, what makes us run very fast in the other direction?

The “constant time algorithms” and “logarithmic time algorithms” (whose numbers of operations are, respectively, a constant that does not depend on $n$, or “something like $\log n$”) are fairly rare, because with $\log n$ operations (or a constant number of operations), we don’t even have the time to look at the whole input. So when we find an algorithm of that type, we’re very, very happy. A typical example of a logarithmic time algorithm is searching for an element in a sorted list. When the list is sorted, it is not necessary to read it completely to find the element that we’re looking for. We can start by checking whether it’s before or after the middle element, and search in the corresponding part of the list. Then we check whether it’s before or after the middle of the new part of the list, and so on.
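The search in a sorted list described above is usually called binary search. Here is a minimal Python sketch of it, which also counts how many “middle” elements it looks at; the function name and the example list of 1000 even numbers are only there for illustration.

```python
def binary_search(sorted_items, target):
    """Search target in sorted_items by repeatedly halving the search range.

    Returns (index, steps): index is the position of target, or None if it
    is absent; steps is the number of middle elements that were inspected.
    """
    low, high = 0, len(sorted_items) - 1
    steps = 0
    while low <= high:
        mid = (low + high) // 2
        steps += 1
        if sorted_items[mid] == target:
            return mid, steps
        if sorted_items[mid] < target:
            low = mid + 1   # target can only be in the right half
        else:
            high = mid - 1  # target can only be in the left half
    return None, steps


shelf = list(range(0, 2000, 2))    # 1000 sorted "books"
print(binary_search(shelf, 1998))  # last element of the list
print(binary_search(shelf, 3))     # an element that is not in the list
```

For 1000 elements, the loop never inspects more than 10 elements, since halving 1000 ten times leaves nothing to search.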

We’re also very happy when we find a “linear time algorithm” (the number of operations is “something like $n$”). That means that we read the whole input, make a few operations per element of the input, and bam, done.

$n \log n$ is also usually considered “acceptable”. It’s an important bound, because it is possible to prove that, in standard algorithmic models (which are quite close to counting “elementary” operations), it is not possible to sort $n$ elements with fewer than “something like $n \log n$” operations in the general case (that is to say, without knowing anything about the elements or the order they are in). A number of algorithms require some sorting at some point: if it is not possible to get rid of the sorting, such an algorithm will not get below $n \log n$ operations either.

We start grumbling a bit at $n^2$ and $n^3$, and we grumble a lot at greater powers of $n$. Algorithms that can run in $n^k$ operations, for some value of $k$ (even 1000000), are called “polynomial”. The idea is that, in the same way that an $n \log n$ algorithm will eventually be more efficient than an $n^2$ algorithm, with a large enough input, a polynomial algorithm, whatever $k$, will be more efficient than a $2^n$-operation algorithm. Or than a $1.5^n$-operation algorithm. Or even than a $1.001^n$-operation algorithm.
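To make “with a large enough input” a bit more concrete, here is a small Python sketch that looks for the input size from which an exponential number of operations stays above a polynomial one. The bases and exponents in the loop are arbitrary values picked for the illustration, and the comparison is done on logarithms to avoid computing huge numbers.

```python
import math


def exponential_overtakes_at(base, k):
    """Smallest n from which base**n stays strictly larger than n**k.

    Compares n * log(base) with k * log(n) instead of the numbers themselves.
    Assumes base > 1 and k >= 1.
    """
    # Past n = k / log(base), the gap between the two logarithms only grows,
    # so the first n found from there on is the final crossover.
    n = max(2, math.ceil(k / math.log(base)))
    while n * math.log(base) <= k * math.log(n):
        n += 1
    return n


for base, k in ((2, 3), (1.5, 3), (1.1, 10)):
    print(f"{base}^n stays above n^{k} from n = {exponential_overtakes_at(base, k)}")
```

A smaller base or a larger exponent only moves the crossover further away; the sketch would simply take longer to find it.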

In real life, however, this type of reasoning does have its limits. When writing code, if there is a solution that takes 20 times (or even 2 times) fewer operations than another, it will generally be the one that we choose to implement. And the asymptotic behavior is only that: asymptotic. It may not apply to the size of the inputs that are processed by our code.

There is an example I like a lot, and I hope you’ll like it too. Consider the problem of multiplying matrices. (For people who never saw matrices: they’re essentially tables of numbers, and you can define how to multiply these tables of numbers. It’s a bit more complicated/complex than multiplying numbers one by one, but not that much more complicated.) (Says the girl who didn’t know how to multiply two matrices before her third year of engineering school, but that’s another story.)

The algorithm that we learn in school multiplies two matrices of size $n \times n$ with $n^3$ operations. There exists an algorithm that is not too complicated (Strassen’s algorithm) that works in about $n^{2.807}$ operations (which is better than $n^3$). And then there is a much more complicated algorithm (Coppersmith-Winograd and later) that works in about $n^{2.376}$ operations. This is, I think, the only algorithm for which I heard SEVERAL theoreticians say “yeah, but really, the constant is ugly”, speaking of the number by which we multiply that $n^{2.376}$ to get the “real” number of operations. That constant is not very well-defined (for the reasons mentioned earlier); we just know that it’s ugly. In practice, as far as I know, the matrix multiplication algorithm that is implemented in “fast” matrix multiplication libraries is Strassen’s or a variation of it, because the constant in the Coppersmith-Winograd algorithm is so huge that the matrices for which it would yield a benefit are too large to be used in practice.
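As a rough illustration of the gap between the school algorithm and Strassen’s, here is a Python sketch that counts only the multiplications of numbers. It relies on the fact that Strassen’s algorithm multiplies two matrices by doing 7 multiplications of half-size matrices where the school method needs 8. It assumes that the size is a power of two and it ignores all the additions, which are part of the hidden “constant”, so it is not a real cost model; the function names are mine.

```python
def school_multiplications(n):
    """Multiplications of numbers done by the school algorithm on n x n matrices."""
    return n ** 3


def strassen_multiplications(n):
    """Multiplications of numbers done by Strassen's recursion on n x n
    matrices, assuming n is a power of two and recursing down to 1 x 1."""
    if n == 1:
        return 1
    # 7 products of half-size matrices instead of the 8 of the school method.
    return 7 * strassen_multiplications(n // 2)


for n in (2, 64, 1024):
    print(n, school_multiplications(n), strassen_multiplications(n))
```

For $n = 1024$ this gives $7^{10}$ multiplications against $1024^3$, a bit less than four times fewer. Actual implementations typically switch back to the school algorithm for small blocks, precisely because of the extra additions.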

And this funny anecdote concludes this post. I hope it was understandable – don’t hesitate to ask questions or make comments 🙂
