A while ago, I bought an excellent book called Drinking French, by David Lebovitz. It’s not strictly a cocktail book, since there’s a lot more than cocktails in it, but I’ll admit that I’ve only tested the cocktail recipes so far.

I have since bought another excellent book, Cocktail Codex, which has also become a staple next to my liquor cabinet.

These two books have a few common issues:

- their (paper) index does not make it easy to search for cocktails that have two distinct ingredients (short of looking up both in the index and finding the intersection),

- their index is not complete and, in particular, does not contain “trivial” ingredients such as lemon juice or simple syrup,

- their index does not necessarily account for substitutions I may feel confident making,

- they don’t share a common index, so I need to look at both to make decisions.

So I typically find myself in a situation where I have limes and no idea what I can make with them, except that it’d be nice to have something with gin. Also, I’m not that picky: if you give me a recipe with lemons, I’ll probably put my limes in it instead and call it good enough. So the problem I was trying to solve was: “given a set of cocktail ingredients, give me ideas for what I can make with them, allowing for some fuzziness in the exact search”.

With that problem in the back of my mind, and at roughly the same time, I read Index, A History of the, by Dennis Duncan (a book about the art of indexing), and I became somewhat fascinated by Wikibase (the MediaWiki-based software backing Wikidata, which handles structured data). Then things clicked: “WHAT IF I re-indexed the books in a Wikibase instance, added some structure to the ingredients for the fuzziness, and made SPARQL queries to get exactly what I want?”

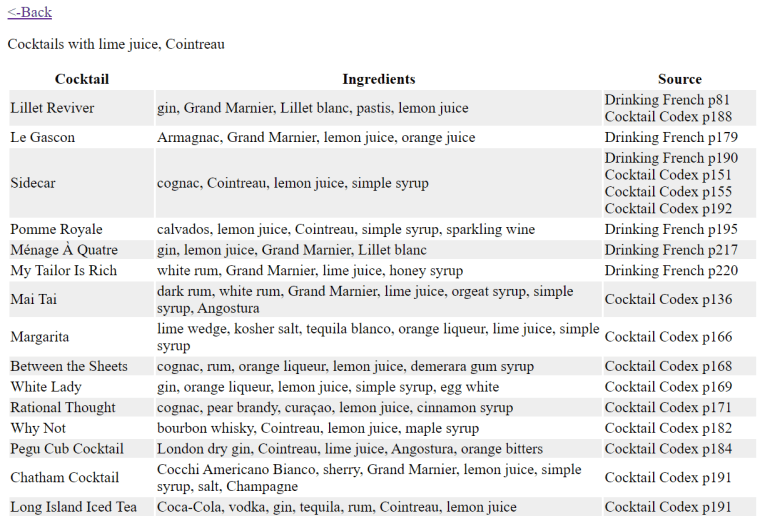

So I did that – I installed Wikibase and started re-indexing. I added some structure to the data with “subclass of”, “instance of”, “can be substituted by” and “such as” statements, and it was glorious. Then I gave some thought to the exact query I was interested in, and I ended up with something along the lines of “given a list of ingredients, get a list of substitutes for each of them, and give me the recipes that contain at least one substitute of each ingredient in the list”, where a “substitute” is either the ingredient itself, something explicitly defined as a substitute, or something that is (transitively) either a refinement or a larger category of the ingredient. The reasoning is that, if I input “London dry gin”, I want the recipes that have “gin” (without qualifier), but also the ones that have a specific brand of London dry gin.
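The substitute-based search described above fits in a few lines once the data is in memory. This is a minimal Python sketch, not the author's SPARQL or PHP: the toy data and the `BROADER`, `SUBSTITUTES`, `RECIPES`, `substitutes` and `search` names are hypothetical stand-ins for the Wikibase statements. Each ingredient's substitutes are the ingredient itself, its explicit (non-transitive) substitutes, and everything reachable by walking the “subclass of”/“instance of” edges either up or down; a recipe matches if it intersects every substitute set.

```python
from collections import defaultdict

# Hypothetical toy data standing in for the Wikibase statements.
# BROADER: ingredient -> direct parents ("subclass of" / "instance of").
BROADER = {
    "London dry gin": {"gin"},
    "Hypothetical Brand London Dry": {"London dry gin"},
    "gin": {"spirit"},
    "lime juice": {"citrus juice"},
    "lemon juice": {"citrus juice"},
}
# Explicit "can be substituted by" statements (not followed transitively).
SUBSTITUTES = {
    "lemon juice": {"lime juice"},
    "lime juice": {"lemon juice"},
}
RECIPES = {
    "Gimlet": {"gin", "lime juice", "simple syrup"},
    "Bee's Knees": {"gin", "lemon juice", "honey syrup"},
    "Daiquiri": {"white rum", "lime juice", "simple syrup"},
}

def invert(edges):
    """Turn child -> parents edges into parent -> children edges."""
    inverted = defaultdict(set)
    for child, parents in edges.items():
        for parent in parents:
            inverted[parent].add(child)
    return inverted

NARROWER = invert(BROADER)

def reachable(start, edges):
    """Every node reachable from start by following edges (transitive closure)."""
    seen, stack = set(), [start]
    while stack:
        for nxt in edges.get(stack.pop(), ()):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen

def substitutes(ingredient):
    """The ingredient, its explicit substitutes, and its broader/narrower items."""
    return ({ingredient}
            | SUBSTITUTES.get(ingredient, set())
            | reachable(ingredient, BROADER)
            | reachable(ingredient, NARROWER))

def search(ingredients):
    """Recipes containing at least one substitute of each requested ingredient."""
    wanted = [substitutes(i) for i in ingredients]
    return sorted(name for name, contents in RECIPES.items()
                  if all(contents & subs for subs in wanted))
```

With this data, `search(["London dry gin", "lime juice"])` returns the Bee's Knees and the Gimlet: unqualified “gin” matches through the broader category, and lemon juice through the explicit substitution. Walking all the way up also pulls in very generic items such as “spirit”; capping the depth of the upward walk would be one way to keep results tighter.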

I put together a small MediaWiki extension called CocktailSearch to query my database and, for a while, I was happy. Here’s an early version of that interface (I had also added page numbers in the meantime!)

And then doubts crept in. My Wikibase install ran on the Docker images. It worked pretty well, but I was a bit unhappy about running nine Docker containers, including one that kept restarting (I probably could have fixed that one, but eh), on a machine (my home Windows desktop) that wasn’t really suited for it. I’m not much of a system administrator and I have zero confidence in my Docker skills in general: the whole setup made me a bit nervous. Migrating my cocktail index to a more persistent setup became my new project.

I considered multiple options, including “just moving the Docker images to another machine”. I finally settled on trying to run Wikibase, without Docker, on a Raspberry Pi that we had lying around. I actually got pretty far in the installation, and I think it could have worked out. But again, I got really nervous about the durability of the setup – Raspberry Pis are not known for being particularly good at data persistence. On top of that, going from something that “mostly works” to something I could plug in and access three minutes later with everything running looked like a goal I might eventually reach, but software rot was a real concern. I felt stuck.

I talked about it with my husband, who pointed out that my usage of SPARQL was fairly limited (it is, in fact, a single large query), that my data was very, very small (maybe a thousand records), and that I could maybe… not use SPARQL, and then not need the whole Wikibase machinery either. I was not convinced at first, because killing your darlings is hard: I had grown quite fond of that architecture, and I did like the idea of running mostly standard software. But it didn’t take much thinking before I got genuinely excited about drastically simplifying the whole project.

So I exported my data to a JSON file and started hacking together an import script. I then looked at my imported data and at my SPARQL query, hacked some loops in PHP, and essentially called it a day: my SPARQL query and my PHP code returned the same results for my handful of test queries.
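To give an idea of what such an import involves, here is a hedged Python sketch (the post's script is PHP and its details aren't shown). It assumes the export is a JSON array of entity objects, the shape of Wikibase JSON dumps, and keeps only each item's label and its item-valued statements. `load_items`, the `P1` property and the sample data are hypothetical; property IDs are arbitrary in a private instance.

```python
import json

def load_items(dump_text, lang="en"):
    """Map each item ID to its label and its item-valued statements."""
    items = {}
    for entity in json.loads(dump_text):
        if entity.get("type") != "item":
            continue
        # Empty maps can be serialized as [] in Wikibase JSON, hence the "or {}".
        labels = entity.get("labels") or {}
        label = labels.get(lang, {}).get("value", entity["id"])
        links = {}
        for prop, statements in (entity.get("claims") or {}).items():
            for statement in statements:
                # "somevalue"/"novalue" snaks carry no datavalue at all.
                datavalue = statement["mainsnak"].get("datavalue")
                if datavalue and datavalue.get("type") == "wikibase-entityid":
                    links.setdefault(prop, []).append(datavalue["value"]["id"])
        items[entity["id"]] = {"label": label, "links": links}
    return items

# Hypothetical two-item dump; P1 plays the role of "subclass of".
SAMPLE_DUMP = json.dumps([
    {"type": "item", "id": "Q1",
     "labels": {"en": {"language": "en", "value": "gin"}},
     "claims": []},
    {"type": "item", "id": "Q2",
     "labels": {"en": {"language": "en", "value": "London dry gin"}},
     "claims": {"P1": [{
         "type": "statement", "rank": "normal",
         "mainsnak": {
             "snaktype": "value", "property": "P1", "datatype": "wikibase-item",
             "datavalue": {"type": "wikibase-entityid",
                           "value": {"entity-type": "item", "numeric-id": 1,
                                     "id": "Q1"}}}}]}},
])

items = load_items(SAMPLE_DUMP)
```

Flattening the nested snak structure into plain ID-to-ID links up front is what makes the later search a matter of simple loops.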

Then came the question of completing the database – indexing takes time, and my database was (and still is) incomplete (and who knows, maybe I’ll index some more books later!). While I can programmatically read the dumped Wikibase JSON, I’m definitely not able to write it by hand without a lot of tears; but keeping Wikibase running just as an input interface felt a tad excessive. Hence, I converted my JSON structures into a flat file format that is easy to write by hand and easy to parse. I added a significant amount of validation to catch typo-duplicates and references to missing items, and I re-exported my JSON data into that new format. I double-checked that I wasn’t losing any information (technically, I’m indexing a bit more than I need, since I also take notes on things like the glass type), and then I started completing the file with new items.
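One possible way to catch typo-duplicates is to flag pairs of names that are suspiciously similar. This Python sketch relies on the standard library's `difflib.SequenceMatcher`; the `near_duplicates` helper and the 0.9 threshold are hypothetical choices, not the post's actual validation. Since `real_quick_ratio()` and `quick_ratio()` are cheap upper bounds on `ratio()`, most dissimilar pairs are rejected early, which should keep the pairwise comparison reasonable for an index of around a thousand records.

```python
from difflib import SequenceMatcher
from itertools import combinations

def near_duplicates(names, threshold=0.9):
    """Return pairs of distinct names similar enough to be likely typos."""
    pairs = []
    for a, b in combinations(sorted(set(names)), 2):
        matcher = SequenceMatcher(None, a.lower(), b.lower())
        # Cheap upper bounds first; the full ratio() only runs if both pass.
        if (matcher.real_quick_ratio() >= threshold
                and matcher.quick_ratio() >= threshold
                and matcher.ratio() >= threshold):
            pairs.append((a, b))
    return pairs

suspects = near_duplicates(
    ["Angostura bitters", "Angustura bitters", "gin", "lime juice"])
```

A similarity check like this only produces warnings: a human still has to decide whether two close names are a typo or two genuinely different ingredients.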

I honestly thought I wouldn’t last three recipes without slapping some kind of interface or autocompletion on that file format, but it’s going significantly better than I expected. I did modify the file format a bit to make it easier to edit manually, at the cost of reading through the file twice when parsing it (I can live with that; and if I couldn’t, I could optimize there, but why bother :P). It feels like enough: I have a validation script that runs fast enough, with good enough error messages, that I can input things and correct them more quickly and more pleasantly than I did with the Wikibase interface.
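Reading the file twice is what allows references to items defined further down. The post doesn't describe the real format, so this Python sketch uses a hypothetical one: a non-indented line names an item, and indented `relation: target` lines attach statements to it. The first pass only collects item names; the second pass reads the relations and reports unknown targets with their line numbers. `parse` and the format are assumptions for illustration.

```python
def parse(text):
    """Parse the (hypothetical) flat format in two passes, collecting errors."""
    lines = text.splitlines()
    errors = []

    # Pass 1: collect item names (non-indented lines), so that pass 2 can
    # accept references to items defined later in the file.
    defined = {}
    for no, line in enumerate(lines, 1):
        stripped = line.strip()
        if not stripped or stripped.startswith("#") or line[:1].isspace():
            continue
        if stripped in defined:
            errors.append(f"line {no}: duplicate item {stripped!r} "
                          f"(first seen on line {defined[stripped]})")
        else:
            defined[stripped] = no

    # Pass 2: read the indented "relation: target" lines and check each target.
    items, current = {}, None
    for no, line in enumerate(lines, 1):
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            continue
        if not line[:1].isspace():
            current = stripped
            items.setdefault(current, [])
            continue
        relation, sep, target = stripped.partition(":")
        target = target.strip()
        if current is None or not sep:
            errors.append(f"line {no}: expected 'relation: target' under an item")
        elif target not in defined:
            errors.append(f"line {no}: unknown item {target!r}")
        else:
            items[current].append((relation.strip(), target))
    return items, errors

SAMPLE = """\
# forward reference: gin is defined below
London dry gin
  subclass of: gin
gin
  subclass of: spirit
"""
items, errors = parse(SAMPLE)
```

Here `errors` reports line 5, because “spirit” is never defined, while the forward reference to “gin” on line 3 is accepted. Collecting all errors instead of stopping at the first one makes a single validation run more useful while typing in new recipes.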

Speaking of interfaces, I also slapped a small web interface on the script, so that I can search with completion and get readable output. The search completion was also far less involved than I expected: it turns out the `<datalist>` tag does exactly what I want, as long as I pass a list of ingredients (which I can get as “transitive subclasses and instances of the ingredient item”) into the generated HTML. And there it is: new interface, new results – with additional data entry done in the meantime too 🙂
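A hedged Python sketch of that completion idea (the post's interface is PHP): collect everything that is transitively a subclass or instance of a root “ingredient” item, and emit it as `<option>` elements inside a `<datalist>` that an `<input>` references through its `list` attribute, which is what gives the browser its native autocomplete. The `PARENTS` data, the `ingredients` id and the function names are hypothetical.

```python
from html import escape

# Hypothetical "subclass of" / "instance of" edges: child -> parents.
PARENTS = {
    "spirit": {"ingredient"},
    "gin": {"spirit"},
    "London dry gin": {"gin"},
    "citrus juice": {"ingredient"},
    "lime juice": {"citrus juice"},
    "coupe": {"glass"},  # not an ingredient, so it must not be offered
}

def descendants(root, parents):
    """Everything that is (transitively) a subclass or instance of root."""
    children = {}
    for child, ps in parents.items():
        for p in ps:
            children.setdefault(p, set()).add(child)
    seen, stack = set(), [root]
    while stack:
        for child in children.get(stack.pop(), ()):
            if child not in seen:
                seen.add(child)
                stack.append(child)
    return seen

def ingredient_search_form(parents):
    """An <input> wired to a <datalist> of every known ingredient."""
    options = "\n".join(
        f'  <option value="{escape(name)}">'  # escape() also quotes " and '
        for name in sorted(descendants("ingredient", parents))
    )
    return ('<input name="ingredient" list="ingredients">\n'
            f'<datalist id="ingredients">\n{options}\n</datalist>')

form_html = ingredient_search_form(PARENTS)
```

No JavaScript is needed for the completion itself: the browser filters the options as the user types.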

So anyway, that’s the genesis of the current version of my cocktail book index, which is now called LimeCocktail in reference to my original “now what do I do with these limes?”.

It does feel like a bit of a convoluted path for something that, at the end of the day, is, like, 500 lines of PHP, give or take, but going down that path was very interesting for a variety of reasons. It gave me some hands-on experience with Wikibase (granted, without the issues that come with “running a public instance” 🙂), and the Wikibase RDF structure helped me define my structured data in a way that makes sense to me. I also stretched my (almost nonexistent) sysadmin muscles to make all of this work together, and I (re-)learnt a few things about the LAMP stack and Elasticsearch. I also got a bit more experience with SPARQL, and I touched jQuery for the first time in a long time to hack the search components backed by Wikibase data. All in all, this project taught me a lot!

Now I just need to finish indexing the Codex… 🙂