This is the translation and update of a blog post originally written in French: “Et donc, c’est quoi, un logarithme ?”.

It’s quite hard to write a series of articles about theoretical computer science without talking about logarithms. Why? Because it’s one of the “useful” functions when one talks about algorithm complexity. So, to make sure that everyone is on the same page, this is a remedial class about logarithms. People who have bad memories about high school maths probably also have bad memories of logarithms; however, logarithms are cool.

Speaking of logarithms, I don’t know how they taught you that in your maths classes; for me, I’m pretty sure it’s been defined from the integral of the reciprocal function. STAY HERE, THAT’S NOT WHAT I’M GOING TO DO. Well, not yet. First, let me try to give some intuition about that thing.

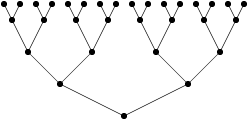

Let us first consider the figure I just successfully inserted in my blog post. I started from a point; from this point I made two branches, at the end of which I added a point; on each of these points, I added two branches, at the end of which I added a point, and so on. I could have continued like that for a while, conceptually – I’d have issues with space and fatigue (it’s going to be annoying really fast to draw points), but I think you can imagine a tree like that with as many levels as you want. Let’s also suppose, because we do computer science, that the “root” of the tree (the very first point I added on my picture at the bottom of it) is at level 0.

Now suppose that I want to count the number of “leaves” of my tree, that is to say the number of points that I have on the highest level of my tree.

It’s pretty clear that the number of leaves depends on the level at which I stop drawing my tree, and that it increases for each level. If I stop drawing at level 0, I have 1 leaf. If I stop at level 1, I multiply that by 2 (because I made two branches), so that’s 2. If I stop drawing at level 2, I multiply the number of leaves at level 1 by 2 again, so that’s 4. And for every level, I take the number of leaves from the previous level and I multiply again by two. At level 3, I’ll have 2×2×2 = 8 leaves, and at level 4, 2×2×2×2 = 16 leaves. To know the number of leaves at level $n$, where $n$ is the number of my level, I multiply $n$ copies of 2 together, which can be written $2^n$ (read “two to the power of $n$”).
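To make the doubling concrete, here is a minimal Python sketch of that count. The function name `leaves_at_level` and the `branches` parameter are mine, not part of the original post; it simply repeats the "multiply by two at each level" reasoning.

```python
def leaves_at_level(level: int, branches: int = 2) -> int:
    """Count the leaves of a tree in which every point grows `branches` branches."""
    count = 1  # level 0: the root on its own
    for _ in range(level):
        count *= branches  # each new level multiplies the previous count
    return count


if __name__ == "__main__":
    for level in range(5):
        print(level, leaves_at_level(level))
```

The loop is deliberately naive: it mirrors the drawing process one level at a time, and gives the same result as Python's built-in `2 ** level`.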

Now suppose that I don’t want to know the number of leaves corresponding to a level, but the number of levels corresponding to a given number of leaves. For instance, I have 2 leaves: that’s level 1. 16 leaves: that’s level 4. 32 leaves, level 5. And if I have 128 leaves, that’s level 7. It gets a bit more complicated if I have, say, 20 leaves. 20 leaves, that’s level “4 and a bit”: I started drawing level 5, and then I stopped because I got too tired to finish.

This operation (finding the level for a given number of leaves) is the inverse function of the previous “power” operation (finding the number of leaves for a given level), and that’s a logarithm. I say it’s the inverse function because it allows me to “undo” the previous operation. If I take a number $n$ and compute the corresponding power of 2, it yields $2^n$, and if I take the logarithm of that, I get $\log(2^n) = n$.

Similarly, if I have a number $x$, take its logarithm, and compute 2 to the power of the result, I get $2^{\log(x)} = x$.
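As a quick sanity check of this “undo” behaviour, here is a small sketch using Python's standard `math.log2`. The helper name `level_for_leaves` is only an illustration of the tree picture, not something from the original post.

```python
import math


def level_for_leaves(leaves: float) -> float:
    """Return the (possibly fractional) level at which a binary tree has `leaves` leaves."""
    return math.log2(leaves)


if __name__ == "__main__":
    for leaves in (2, 16, 32, 128, 20):
        level = level_for_leaves(leaves)
        # Raising 2 to that level should give back the number of leaves
        # (up to floating-point rounding when the level is not an integer).
        print(leaves, level, 2 ** level)
```

For 20 leaves the result lands strictly between 4 and 5, which is the “4 and a bit” of the unfinished tree.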

Alright, everything’s nice and shiny, but what happens if, instead of making two branches at each step, I make 3? With the same reasoning as before, at level $n$, I have 3×3×…×3 leaves, that is $3^n$. And, well, in the same way, I can define a logarithm that would be the inverse of this $3^n$. But I do want to be able to tell one from the other, so I write the number whose powers they correspond to as a subscript, like this: $\log_2$ and $\log_3$, with $\log_2(2^n) = n$ and $\log_3(3^n) = n$.

That subscript is the “base” of the logarithm. Allow me a small remark about logarithms in base 10 (it’s also true for other bases, at least integer ones, but let me avoid that). It’s very easy to get a rough estimate of the logarithm in base 10 of a number: it’s the number of digits of said number, minus 1. We have $\log_{10}(10) = 1$ and $\log_{10}(100) = 2$ (because $10^2 = 100$); the logarithm base 10 of all the numbers between 10 and 100 is between 1 and 2. In the same way, you can say that the logarithm base 10 of 14578 is between 4 and 5, because 14578 is between $10^4 = 10000$ and $10^5 = 100000$, which pins down the integer part of the logarithm. (I’m hiding a number of things here, including the reasons that make that reasoning actually correct.)
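The digit-counting trick is easy to check on a few positive integers. This is only a sketch: `digits_minus_one` is a name I made up, and the comparison relies on Python's standard `math.log10`.

```python
import math


def digits_minus_one(n: int) -> int:
    """Rough estimate of log10(n) for a positive integer: its number of digits, minus 1."""
    return len(str(n)) - 1


if __name__ == "__main__":
    for n in (7, 10, 99, 100, 14578):
        # The estimate should be the integer part of the actual logarithm.
        print(n, digits_minus_one(n), math.log10(n))
```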

As an aside, as you may see, one interesting property of the logarithm is that it can “compress” orders of magnitude, which helps if you want to represent on a single sheet of paper quantities that span a very large range. For example, in chemistry, you may want to represent concentrations that go from $10^{-10}$ to $1$, and on a “normal” scale, you wouldn’t be able to distinguish between $10^{-10}$ and $10^{-9}$. You can however use a logarithmic scale, so that you represent with the same amount of space “things that happen between $10^{-10}$ and $10^{-9}$” and “things that happen between 0.01 and 0.1”. For large scales, xkcd made a very nice drawing with the observable universe seen at log scale: Height.
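Here is a tiny sketch of what “the same amount of space” means. The concentration values are made up for the illustration, and `log_position` is a hypothetical helper that gives the position of a value along a base-10 logarithmic axis.

```python
import math


def log_position(value: float) -> float:
    """Position of a positive value along a base-10 logarithmic axis."""
    return math.log10(value)


if __name__ == "__main__":
    # Illustrative concentrations spanning ten orders of magnitude.
    concentrations = [1e-10, 1e-9, 1e-2, 1e-1, 1.0]
    for value in concentrations:
        print(value, log_position(value))
    # On a linear axis, 1e-10 and 1e-9 are squashed together near 0;
    # on the log axis they are one unit apart, just like 0.01 and 0.1.
    print(log_position(1e-9) - log_position(1e-10))
    print(log_position(1e-1) - log_position(1e-2))
```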

Back to the previous point – now I have defined the concept of “base” for my logarithm – that’s the number corresponding to the power function that I invert to get my logarithm. The question is – what prevents me from using “exotic” bases for my logarithms? The answer is “nothing”. I can define a logarithm in base 3.5 (corresponding to the power to which I raise 3.5 to get the number for which I’m computing the logarithm base 3.5), $\frac{5}{3}$ (corresponding to the power to which I raise $\frac{5}{3}$ to get the number for which I’m computing the logarithm base $\frac{5}{3}$), or even $\pi$ (corresponding to… okay, you get the idea) if I want to. It’s less “intuitive” than when looking at the explanation with the tree and the number of levels (because it’s pretty hard to draw $\pi$ branches), but if you see it as the inverse of the power of the same number, I hope you get the idea.
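Nothing prevents a computer from using exotic bases either. As a sketch, Python's standard `math.log` accepts the base as an optional second argument; the wrapper `log_base` and the particular bases tried below are just my choices for the example.

```python
import math


def log_base(x: float, base: float) -> float:
    """Logarithm of x in an arbitrary base (positive and different from 1)."""
    return math.log(x, base)


if __name__ == "__main__":
    for base in (3.5, 5 / 3, math.pi):
        exponent = log_base(10, base)
        # Raising the base to that exponent should give back 10, up to rounding.
        print(base, exponent, base ** exponent)
```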

Now the next question you can ask is whether all these logarithms are somehow linked, or whether you can express them in some common way. The answer is yes. There exists the following relation between logarithms of any three bases $a$, $b$ and $c$:

$$\log_a(b) = \frac{\log_c(b)}{\log_c(a)}$$

(Yes, that’s typically the kind of thing that I had on my exam formula sheet because I always get confused, especially when I’m stressed out… like I am in an exam 😉 )

Also observe that the base of the logarithm absolutely does not matter: the ratio between the logarithms of two numbers stays the same independently of the base.
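The fact that a ratio of logarithms does not depend on the base is easy to check numerically. In this sketch, `log_via` is a hypothetical helper that computes a logarithm in one base by going through another, intermediate base.

```python
import math


def log_via(x: float, target_base: float, via_base: float) -> float:
    """Compute the logarithm of x in `target_base` as a ratio of two logarithms in `via_base`."""
    return math.log(x, via_base) / math.log(target_base, via_base)


if __name__ == "__main__":
    direct = math.log(20, 3)  # logarithm of 20 in base 3, computed directly
    for via_base in (2, 10, math.e, 3.5):
        # Whatever the intermediate base, the ratio should stay the same.
        print(via_base, log_via(20, 3, via_base), direct)
```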

The important thing to remember here is that all logarithms are equal “up to a constant”; they have the same “asymptotic behavior” (I’m giving the terms here, but I’ll write a specific post on the topic, because it’s a bit long to explain). For theoretical computer science, it’s interesting because we’re mostly interested in behaviors “up to a constant” when we’re talking about execution time or memory consumption of an algorithm. Again – I’ll come back to this later – take it as a “spoiler” of the next episodes 🙂

People from different backgrounds tend to prefer different bases for their logarithms; the three most common bases are 2, 10 and $e$. Here, I feel that it’s possible someone just read that and went “wait, what?”. As far as powers go, there is a “special” one: the powers of $e$. $e$ is approximately equal to 2.71828, and the function $x \mapsto e^x$ has its own name and its own notation: it’s the exponential function, and $e^x = \exp(x)$. It’s special because of an interesting property: it is equal to its derivative. And because the exponential function is often used, its inverse, the “natural logarithm” (logarithm in base $e$) is also used a lot, and we write $\ln(x) = \log_e(x)$. It’s also been brought to my attention that some conventions (and, I presume, authors) use $\log$ for the natural logarithm and use $\lg$ for the logarithm in base 10 (or in base 2, depending on the field). Wikipedia has more opinions on the question.

It also turns out that the natural logarithm is related to the reciprocal function $x \mapsto \frac{1}{x}$. Formally, we write that

$$\ln(x) = \int_1^x \frac{1}{t} \, dt$$

And this is what it means, graphically:

The red curve represents the function $x \mapsto \frac{1}{x}$. The grey zone corresponds here to the integral from 1 to 6: the area of that zone is equal to $\ln(6)$. And you can represent the natural logarithm of any value (greater than 1) by the area of the zone between the x-axis and the $\frac{1}{x}$ curve from 1 to that value. Side remark: this area is equal to 1 when you take it from 1 to $e$ (because $\log_a(a) = 1$ for all $a$, so $\ln(e) = \log_e(e) = 1$).
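If you’d rather trust a computer than a drawing, the area can be approximated by cutting the zone into thin rectangles. This is a rough sketch using the midpoint rule, which is my choice for the illustration; the function name and the number of steps are arbitrary.

```python
import math


def area_under_reciprocal(upper: float, steps: int = 100_000) -> float:
    """Midpoint-rule estimate of the area under 1/t between 1 and `upper` (upper > 1)."""
    width = (upper - 1) / steps
    # Each rectangle has width `width` and height 1/t at the middle of its slice.
    return sum(width / (1 + (i + 0.5) * width) for i in range(steps))


if __name__ == "__main__":
    print(area_under_reciprocal(6), math.log(6))       # both should be close to ln(6)
    print(area_under_reciprocal(math.e), math.log(math.e))  # both should be close to 1
```

`math.log` with a single argument is the natural logarithm, so the two columns should agree to several decimal places.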

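And, to conclude, a nice property of the logarithm function: you can write (in any logarithm base):

$$\log(a \times b) = \log(a) + \log(b) \quad \text{and} \quad \log\left(\frac{a}{b}\right) = \log(a) - \log(b)$$

This is probably easiest to see via the power functions. Let us write things down in base 2 (the same works in any base). We have, on the one hand:

$$a \times b = 2^{\log_2(a \times b)}$$

and, on the other hand,

$$a \times b = 2^{\log_2(a)} \times 2^{\log_2(b)} = 2^{\log_2(a) + \log_2(b)}$$

Since, if $2^x = 2^y$, then $x = y$, putting everything together yields the first result. The second equality (with the minuses) can be derived in exactly the same way (and left as an exercise to the reader 😉 ).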
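Without spoiling the exercise, both equalities can at least be checked numerically. A small sketch, where the helper names and the sample values are mine, and the results agree only up to floating-point rounding:

```python
import math


def product_rule_sides(a: float, b: float, base: float) -> tuple[float, float]:
    """Return log(a * b) and log(a) + log(b), both in the given base."""
    return math.log(a * b, base), math.log(a, base) + math.log(b, base)


def quotient_rule_sides(a: float, b: float, base: float) -> tuple[float, float]:
    """Return log(a / b) and log(a) - log(b), both in the given base."""
    return math.log(a / b, base), math.log(a, base) - math.log(b, base)


if __name__ == "__main__":
    for base in (2, 10, math.e):
        print(base, product_rule_sides(12.5, 40, base), quotient_rule_sides(12.5, 40, base))
```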

Note that it’s because of that kind of property that logarithms were used in the first place. If you have a large table with a lot of “number/logarithm” correspondences, and if you want to multiply two large numbers easily, you look up in the table the logarithms of both numbers, you add them (which is much easier than multiplying them), and you look at the corresponding value (by reading the table in the other direction) to get the product. Historically, that kind of table appeared in the 17th century (thank you Mr. Napier) to make astronomical computation easier; slide rules also work on that principle; and all of this only started to disappear when, quite recently, mechanical and later electronic calculators appeared…
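To get a feeling for how such a table was used, here is a toy simulation. Everything in it is an assumption made for the example: the table holds base-10 logarithms of 1.000, 1.001, …, 9.999 rounded to four decimals, which is not a reproduction of any historical table, and the function names are made up.

```python
import bisect
import math

# A hypothetical four-figure table: numbers from 1.000 to 9.999 and their base-10 logarithms.
NUMBERS = [1 + i / 1000 for i in range(9000)]
LOGS = [round(math.log10(number), 4) for number in NUMBERS]


def lookup_log(x: float) -> float:
    """Read log10(x) from the table, for x > 0."""
    exponent = math.floor(math.log10(x))  # for x >= 1: number of digits before the point, minus 1
    leading = x / 10 ** exponent          # bring x between 1 and 10
    index = min(round((leading - 1) * 1000), len(LOGS) - 1)
    return exponent + LOGS[index]


def lookup_antilog(log_value: float) -> float:
    """Read the table in the other direction: find a number whose logarithm is log_value."""
    exponent = math.floor(log_value)
    fraction = log_value - exponent
    index = min(bisect.bisect_left(LOGS, fraction), len(LOGS) - 1)
    return NUMBERS[index] * 10 ** exponent


def multiply_with_table(a: float, b: float) -> float:
    """Multiply two positive numbers using only two lookups, one addition and one reverse lookup."""
    return lookup_antilog(lookup_log(a) + lookup_log(b))


if __name__ == "__main__":
    print(multiply_with_table(1234, 5678), 1234 * 5678)
```

The result is only approximate, since the table has a limited number of figures; that was the price to pay for replacing a long multiplication with an addition.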

Anyway. Logarithms are cool. And now I hope you’re also convinced of that 🙂
